If you want to say “Task A must run before Task B”, you have to define the corresponding dependency. How? task_a >> task_b

choosing_best_model = BranchPythonOperator(

Okay, it’s a bit more complicated so let’s start at the beginning. First, the BranchPythonOperator executes the Python function _choose_best_model. This function returns the task id of the next task to execute. Here, either is_accurate or is_inaccurate.

Now, there is one thing we didn’t talk about yet. When you want to share data between tasks in Airflow, you have to use XCOMs. XCOM stands for cross-communication; it is the mechanism to exchange data between tasks in a DAG. An XCOM is an object that has a key, serving as an identifier, and a value, corresponding to the value to share. I won’t go into the details here as I made a long article about it, but keep in mind that by returning the accuracy from the Python function _training_model_X, we create an XCOM with that accuracy, and with xcom_pull in _choose_best_model, we fetch that XCOM. As we want the accuracy of each training_model task, we specify the task ids of these 3 tasks.

The last two tasks to implement are is_accurate and is_inaccurate. To do that, you can use the BashOperator and execute a very simple bash command to print either “accurate” or “inaccurate” on the standard output (simple for now).

Here is an example of operators:

from airflow.operators.bash import BashOperator

from airflow.operators.python import PythonOperator

An Operator has parameters like the task_id, which is the unique identifier of the task in the DAG. The other parameters to fill in depend on the operator. For example, with the BashOperator, you must pass the bash command to execute. With the PythonOperator, you must pass the Python script or function to execute, etc. To know what parameters your Operator requires, the documentation is your friend.

You know what a DAG is and what an Operator is. Time to understand how to create the directed edges, or in other words, the dependencies between tasks. Those directed edges correspond to the dependencies between tasks (operators) in an Airflow DAG.
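In Airflow, “task_a must run before task_b” is written task_a >> task_b. The toy class below is not Airflow code; ToyTask is a hypothetical stand-in that only sketches how Python’s >> operator can be overloaded to record a directed edge between two tasks.

```python
class ToyTask:
    """Hypothetical stand-in for an operator; NOT Airflow's real class."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.downstream = []  # tasks that must run after this one

    def __rshift__(self, other):
        # `self >> other` means: run `self` first, then `other`.
        self.downstream.append(other)
        # Returning `other` allows chaining: a >> b >> c.
        return other


task_a = ToyTask("task_a")
task_b = ToyTask("task_b")
task_c = ToyTask("task_c")

# Declare the directed edges task_a -> task_b -> task_c.
task_a >> task_b >> task_c

print([t.task_id for t in task_a.downstream])
print([t.task_id for t in task_b.downstream])
```

Each >> adds one directed edge, so a chain of tasks reads left to right in the order they run.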

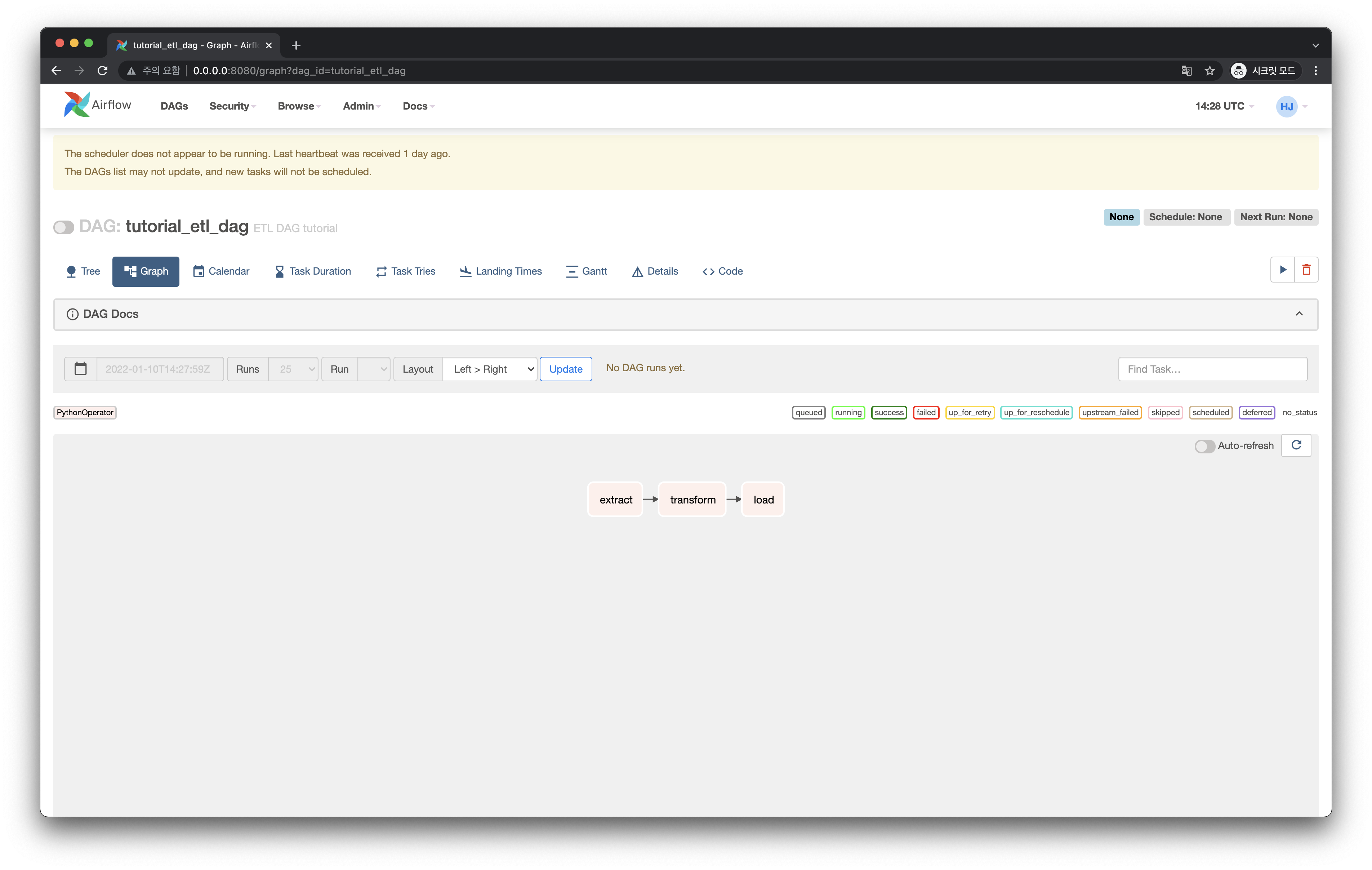

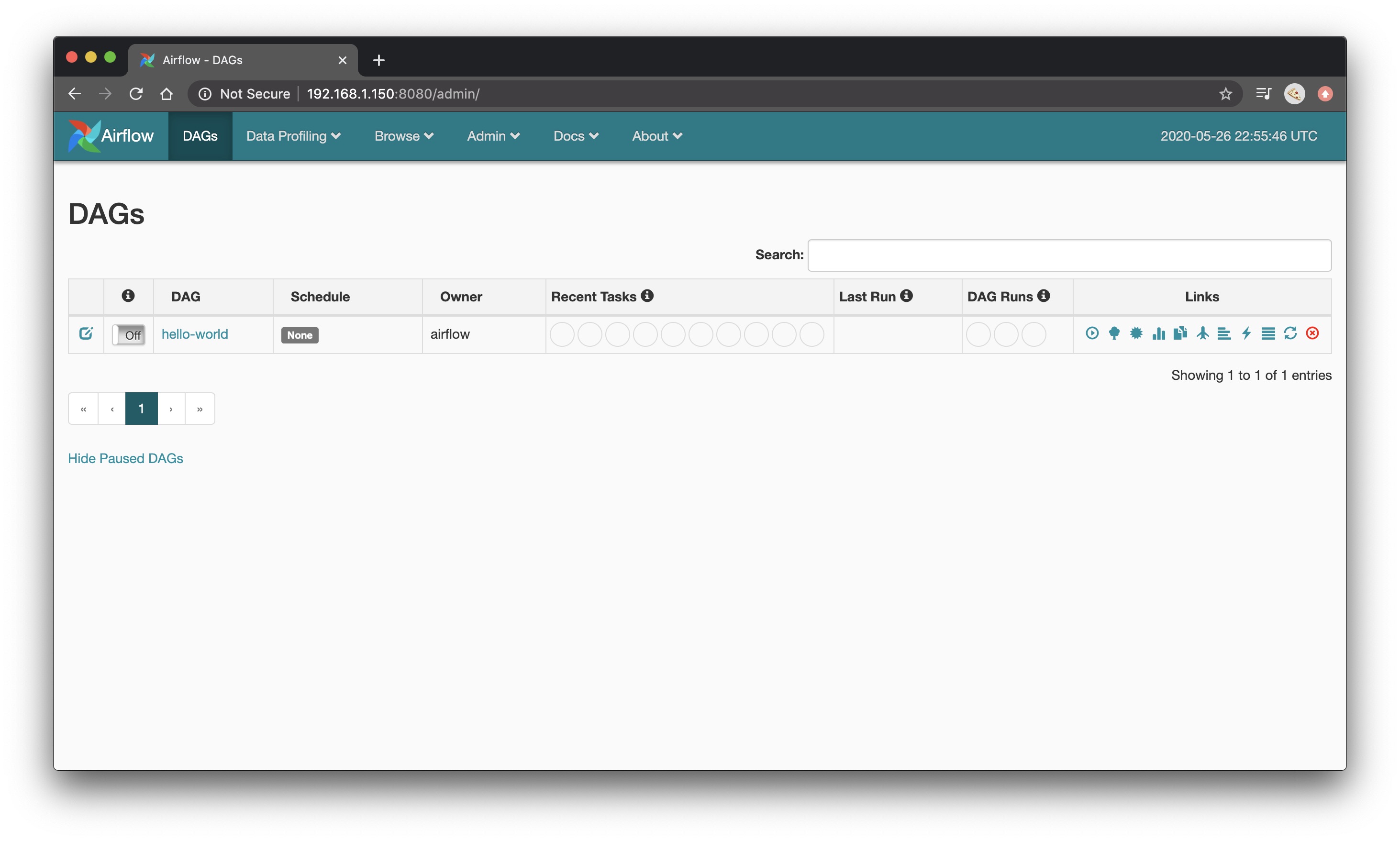

In simple terms, a DAG is a graph with nodes, directed edges, and no cycles. If Node A depends on Node C, which depends on Node B, which itself depends on Node A, the graph contains a cycle: it is not a DAG and won’t run at all.

A DAG is a data pipeline in Apache Airflow. Whenever you read “DAG,” it means “data pipeline.” Last but not least, when Airflow triggers a DAG, it creates a DAG run with information such as the logical_date, data_interval_start, and data_interval_end.

What is an Airflow Operator?

Ok, once you know what a DAG is, the next question is: what is a “Node” in the context of Airflow? An Operator is an object that encapsulates the logic you want to achieve. For example, if you want to execute a Python function, you can use the PythonOperator. If you want to execute a Bash command, you can use the BashOperator, and so on. Airflow brings plenty of operators that you can find in its documentation. When Airflow triggers a task (operator), that creates a Task instance.
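The “no cycles” rule can be checked with a few lines of plain Python. This sketch does not use Airflow; it relies on the standard library’s graphlib module (Python 3.9+), and the two small graphs are made up for illustration. The cyclic one mirrors the A, C, B example above.

```python
from graphlib import CycleError, TopologicalSorter

# Each key depends on the nodes in its set (node -> nodes it waits for).
valid_graph = {"B": {"A"}, "C": {"B"}}  # A runs, then B, then C
cyclic_graph = {"A": {"C"}, "C": {"B"}, "B": {"A"}}  # A needs C needs B needs A


def execution_order(graph):
    """Return a valid run order, or None when the graph contains a cycle."""
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError:
        return None


print(execution_order(valid_graph))
print(execution_order(cyclic_graph))
```

A graph without cycles always has at least one valid run order; a graph with a cycle has none, which is why it cannot be scheduled.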

Imagine you want to create the following data pipeline: train three different machine learning models, then choose the best one and execute either is_accurate or is_inaccurate based on the accuracy score of the best model. You could store the value in a database, but let’s keep things simple.

Now, the first question that comes up is: How can I create an Airflow DAG representing this data pipeline? Well, this is precisely what you are about to find out now!

Airflow DAG? Operators? Terminologies

Before jumping into the code, you must first get used to some terminologies. I know, the boring part, but stay with me, it is essential.

DAG stands for Directed Acyclic Graph.
